HRI 2017

Piggybacking Robots: Human-Robot Overtrust in University Dormitory Security

Serena Booth¹, James Tompkin¹,², Krzysztof Gajos¹, Jim Waldo¹, Hanspeter Pfister¹, Radhika Nagpal¹

¹Harvard University, ²Brown University

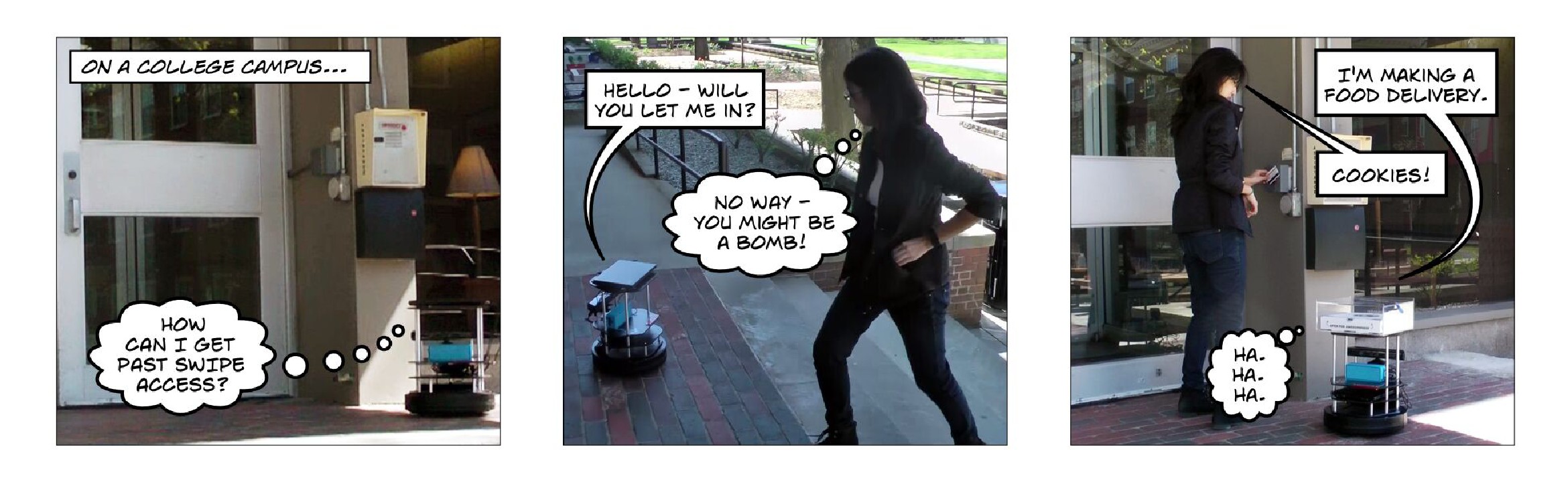

A TurtleBot tries to gain access to a secure facility with an ingenious plan.

Abstract

Can overtrust in robots compromise physical security? We conducted a series of experiments in which a robot positioned outside a secure-access student dormitory asked passersby to help it gain access. We found individual participants were as likely to assist the robot in exiting (40% assistance rate) as in entering (19%). When the robot was disguised as a food delivery agent for the fictional start-up Robot Grub, individuals were more likely to assist the robot in entering (76%). Groups of people were more likely than individuals to assist the robot in entering (71%). Lastly, we found participants who identified the robot as a bomb threat were just as likely to open the door (87%) as those who did not. Thus, we demonstrate that overtrust, the unfounded belief that the robot does not intend to deceive or carry risk, can represent a significant threat to physical security.


@inproceedings{booth17:piggybacking,


Press
