
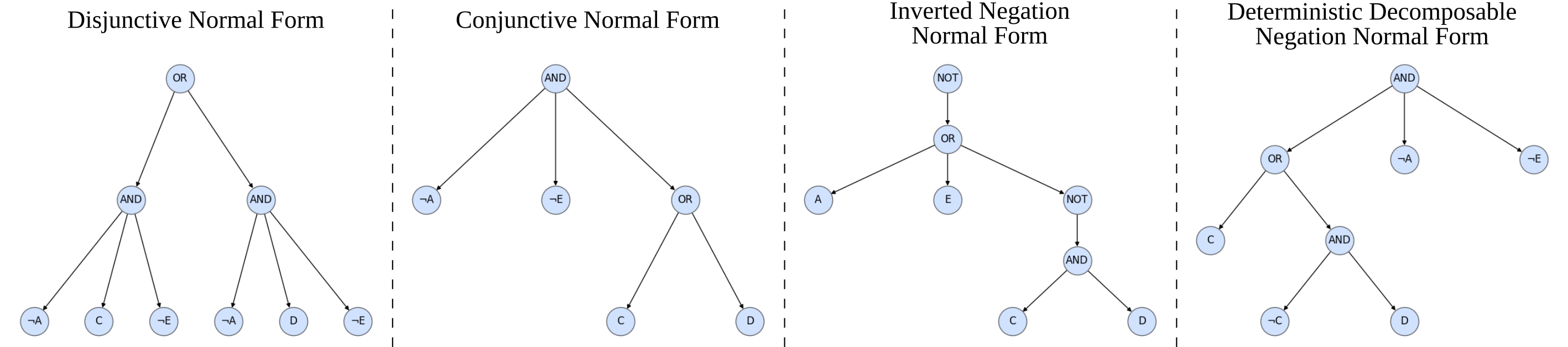

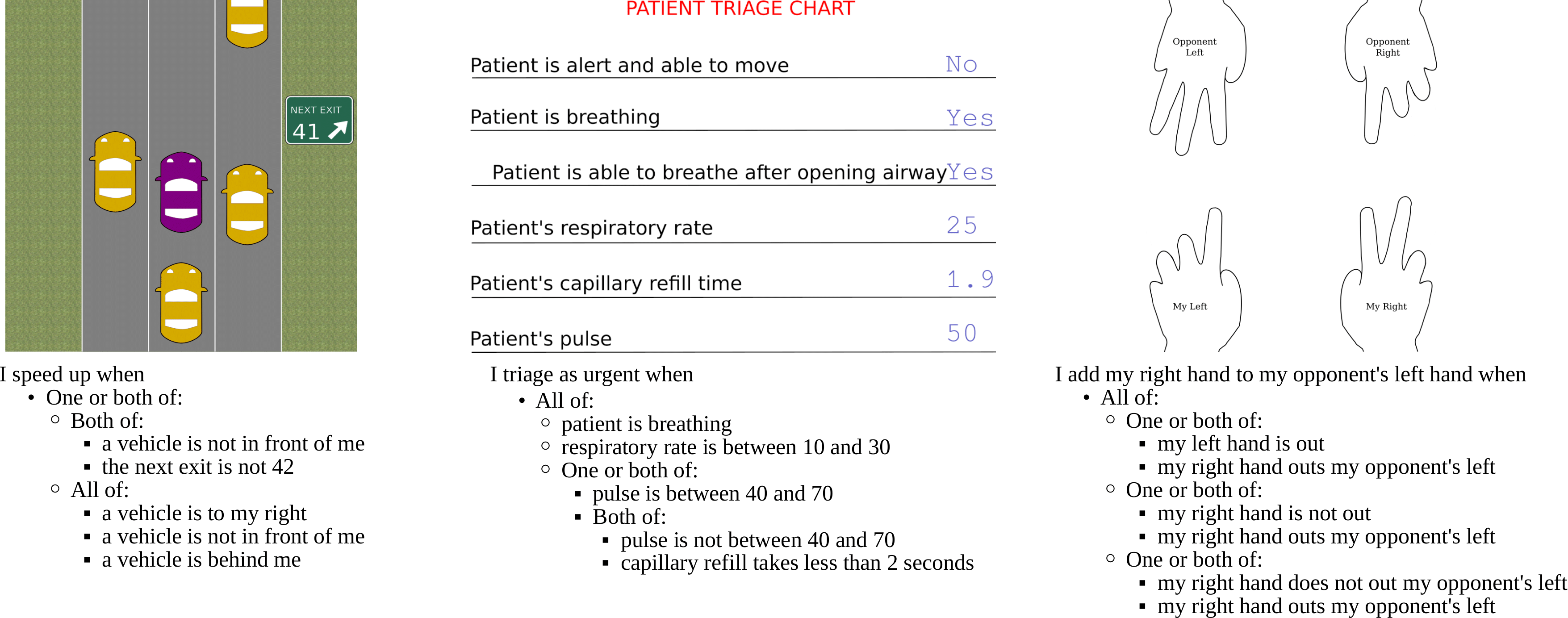

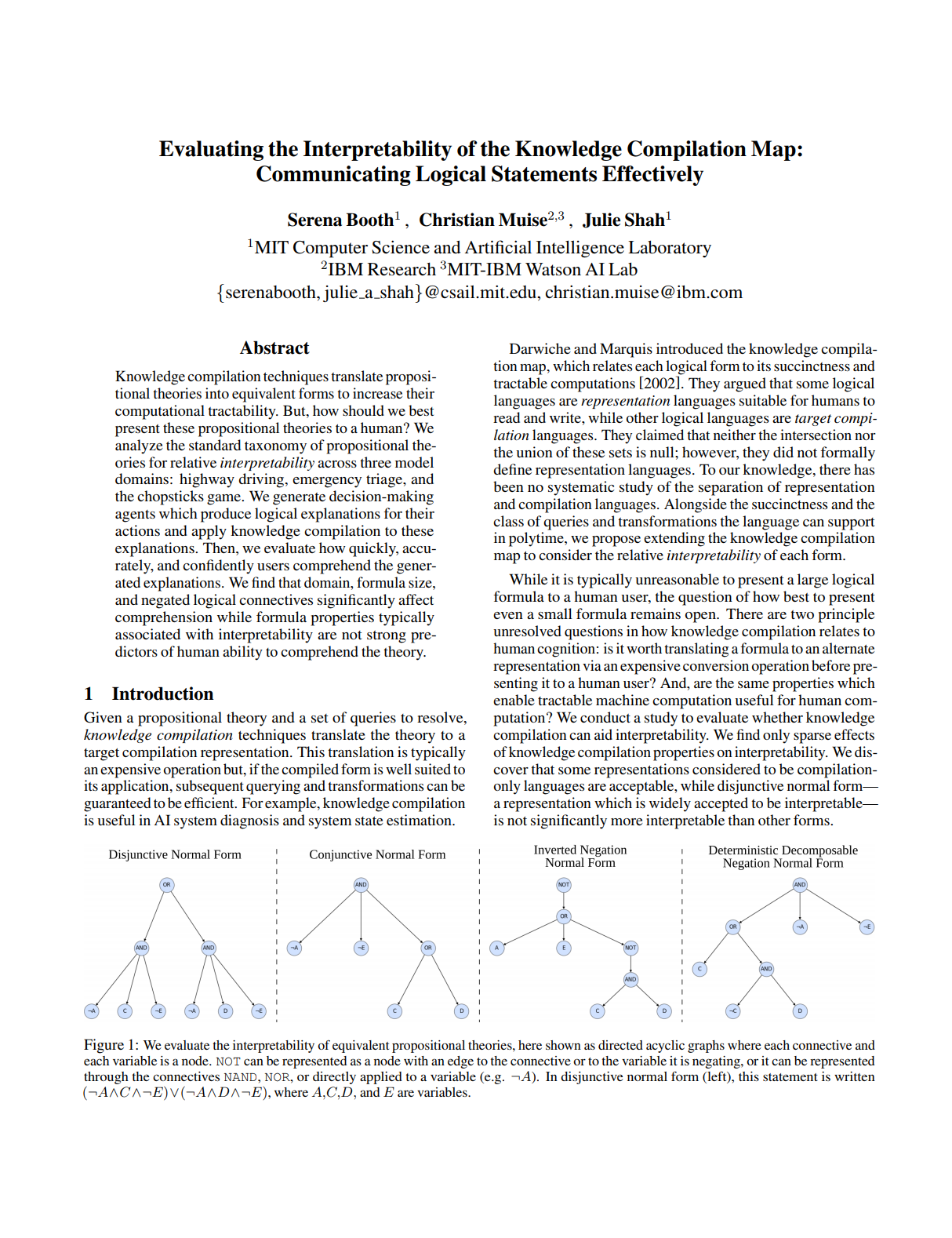

Knowledge compilation techniques translate propositional theories into equivalent forms to increase their computational tractability. But how should we best present these propositional theories to a human? We analyze the standard taxonomy of propositional theories for relative interpretability across three model domains: highway driving, emergency triage, and the chopsticks game. We generate decision-making agents that produce logical explanations for their actions and apply knowledge compilation to these explanations. We then evaluate how quickly, accurately, and confidently users comprehend the generated explanations. We find that domain, formula size, and negated logical connectives significantly affect comprehension, while formula properties typically associated with interpretability are not strong predictors of human ability to comprehend the theory.
