AAAI 2021

Bayes-TrEx: a Bayesian Sampling Approach to Model Transparency by Example

Serena Booth\*¹, Yilun Zhou\*¹, Ankit Shah¹, Julie Shah¹

\*Equal contribution

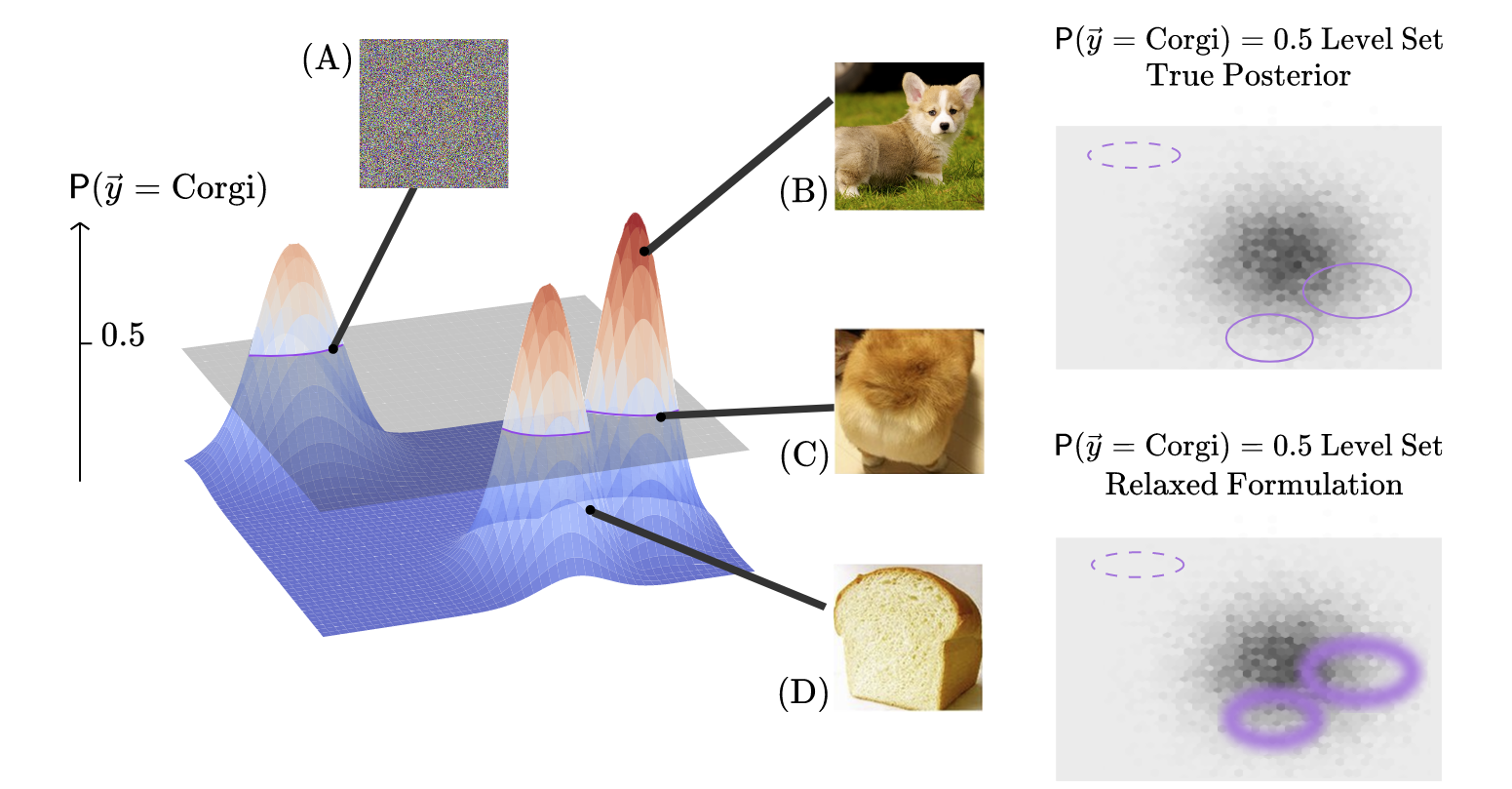

Left: Given a Corgi/Bread classifier, we generate prediction level sets: sets of examples that trigger a target prediction confidence (e.g., p(Corgi) = p(Bread) = 0.5). Perturbing an arbitrary image until it triggers the target confidence is one way to find such examples, as shown in (A). However, such examples give little insight into typical model behavior because they are unrealistic and unlikely. For more insight, Bayes-TrEx explicitly considers a data distribution (gray shading in the right-hand plots) and finds in-distribution examples in a particular level set (e.g., likely Corgi (B), likely Bread (D), or ambiguous between Corgi and Bread (C)).

Top right: the classifier level set p(Corgi) = p(Bread) = 0.5 overlaid on the data distribution. Example (A) is unlikely to be sampled by Bayes-TrEx because it has near-zero density under the distribution, while example (C) is likely to be sampled.

Bottom right: sampling directly from the true posterior is infeasible, so we relax the formulation by “widening” the level set. By using different data distributions and target confidences, Bayes-TrEx can uncover examples that invoke various model behaviors, improving model transparency.
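The contrast between example (A) and example (C), and the relaxation in the bottom-right panel, can be illustrated with a minimal sketch. Everything here is a hypothetical stand-in rather than anything taken from the Bayes-TrEx code: a 2-D standard-normal "data distribution", a logistic "Corgi vs. Bread" classifier, a Gaussian term of width `sigma` as one way to "widen" the level set, and random-walk Metropolis as the sampler. Gradient descent reaches the level set from an arbitrary start but lands far from the data, while the relaxed sampler returns points near the same level set that are also likely under the distribution.

```python
import numpy as np

# Hypothetical toy stand-ins: the "data distribution" is N(0, I) in 2-D and the
# "Corgi vs. Bread" classifier is logistic, p(Corgi | x) = sigmoid(w . x).
W = np.array([1.0, -1.0])

def p_corgi(x):
    """Classifier confidence for one point (shape (2,)) or a batch (shape (n, 2))."""
    return 1.0 / (1.0 + np.exp(-(x @ W)))

def log_density(x):
    """Log-density of the toy data distribution N(0, I) at a single point."""
    return -0.5 * float(x @ x) - np.log(2.0 * np.pi)

# Like (A): push an arbitrary point onto the level set p(Corgi) = 0.5 by gradient
# descent on (p - target)^2. The data distribution is ignored entirely.
def perturb_to_level_set(x0, target=0.5, lr=2.0, steps=200):
    x = np.array(x0, dtype=float)
    for _ in range(steps):
        p = p_corgi(x)
        x -= lr * 2.0 * (p - target) * p * (1.0 - p) * W  # chain rule through sigmoid
    return x

# Like (C): sample the relaxed posterior p(x) * N(target; p_corgi(x), sigma^2).
# The Gaussian term "widens" the zero-volume level set into a band of width ~sigma.
def log_relaxed_posterior(x, target, sigma):
    return log_density(x) - 0.5 * ((p_corgi(x) - target) / sigma) ** 2

def metropolis(target, sigma=0.02, n_samples=5000, step=0.5, seed=0):
    """Random-walk Metropolis: accept with probability min(1, density ratio)."""
    rng = np.random.default_rng(seed)
    x = np.zeros(2)
    logp = log_relaxed_posterior(x, target, sigma)
    samples = []
    for _ in range(n_samples):
        proposal = x + step * rng.standard_normal(2)
        logp_new = log_relaxed_posterior(proposal, target, sigma)
        if np.log(rng.uniform()) < logp_new - logp:
            x, logp = proposal, logp_new
        samples.append(x.copy())
    return np.array(samples)

x_adv = perturb_to_level_set([9.0, 7.0])  # arbitrary far-away starting point
samples = metropolis(target=0.5)
conf = p_corgi(samples)
mean_log_density = np.mean([log_density(s) for s in samples])

print("perturbed:", p_corgi(x_adv), log_density(x_adv))
print("sampled:  ", conf.mean(), mean_log_density)
```

Both lines should report a confidence near 0.5, but the perturbed point's log-density is far lower than that of the sampled points. Shrinking `sigma` tightens the band around the level set at the cost of slower mixing, and changing `target` (for example to 0.99) asks for confident predictions instead of ambiguous ones.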

Abstract

Post-hoc explanation methods are gaining popularity for interpreting, understanding, and debugging neural networks. Most analyses using such methods explain decisions in response to inputs drawn from the test set. However, the test set may have few examples that trigger some model behaviors, such as high-confidence failures or ambiguous classifications. To address these challenges, we introduce a flexible model inspection framework: Bayes-TrEx. Given a data distribution, Bayes-TrEx finds in-distribution examples with a specified prediction confidence. We demonstrate several use cases of Bayes-TrEx, including revealing highly confident (mis)classifications, visualizing class boundaries via ambiguous examples, understanding novel-class extrapolation behavior, and exposing neural network overconfidence. We use Bayes-TrEx to study classifiers trained on CLEVR, MNIST, and Fashion-MNIST, and we show that this framework enables more flexible, holistic model analysis than inspecting the test set alone. Code is available at https://github.com/serenabooth/Bayes-TrEx.
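The "highly confident misclassification" use case can be sketched in the same spirit: restrict the data distribution to one class and ask for high confidence in another. This minimal sketch assumes a hypothetical toy problem ("Corgi" inputs drawn from N([1, -1], I), a logistic classifier) and a Gaussian relaxation of width `sigma`. It swaps in plain rejection sampling, which is exact for the relaxed posterior here only because the relaxed likelihood never exceeds 1 and the toy distribution is cheap to sample; it is not the sampler used in the paper's code, and it would be far too inefficient for high-dimensional image models.

```python
import numpy as np

# Hypothetical toy setup: "Corgi" inputs ~ N([1, -1], I), and a logistic
# classifier with p(Corgi | x) = sigmoid(x1 - x2), so p(Bread | x) = 1 - p(Corgi | x).
MU_CORGI = np.array([1.0, -1.0])
W = np.array([1.0, -1.0])

def p_bread(x):
    """p(Bread | x) for a batch of points with shape (n, 2)."""
    return 1.0 - 1.0 / (1.0 + np.exp(-(x @ W)))

def sample_confident_failures(target=0.9, sigma=0.05, n_proposals=200_000, seed=0):
    """Rejection sampling from p(x | Corgi) * N(target; p_bread(x), sigma^2).

    The unnormalized Gaussian factor is at most 1 (attained when
    p_bread(x) == target), so accepting each proposal with exactly that
    probability yields exact samples of the relaxed posterior.
    """
    rng = np.random.default_rng(seed)
    x = MU_CORGI + rng.standard_normal((n_proposals, 2))  # draws from p(x | Corgi)
    accept_prob = np.exp(-0.5 * ((p_bread(x) - target) / sigma) ** 2)
    keep = rng.uniform(size=n_proposals) < accept_prob
    return x[keep]

failures = sample_confident_failures()
print(len(failures), p_bread(failures).mean())

# For comparison: how often such failures show up among 1000 ordinary draws
# from the same class distribution, i.e. in a finite "test set".
plain = MU_CORGI + np.random.default_rng(1).standard_normal((1000, 2))
print(np.mean(p_bread(plain) >= 0.9))
```

Every returned point is a plausible Corgi input that the toy classifier labels Bread with high confidence, whereas the second print shows how rarely a fixed sample of the same distribution contains such inputs.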

@inproceedings{booth21:bayestrex,
